Arizona State University soft launched a web app earlier this month that allows anyone, for $5 per month, to create an apparently unlimited number of customized “learning modules” using artificial intelligence. The AI chatbot, called Atom, uses online instructional materials from ASU professors to create a course that’s tailored to the goals, interests and skill level of the user. After asking a handful of questions and processing for about five minutes, Atom debuts a personalized course that includes readings, quizzes and videos from a half dozen experts at ASU.

But several professors whose content Atom pulls from were surprised to learn that their materials—including video lectures, slide decks and online assignments—were being perused, clipped and repackaged for these short online course modules. The faculty wasn’t told anything about the app, ASU Atomic, they said.

(SIDE NOTE: I so DESPERATELY want to use a video clip here from “Ted 2” that smack talks Arizona State right now, given how stupid this situation is, but I think the editors at Sage might pop a brain bleed. The tamest thing said in that exchange was, “Do you say Arizona State University or just HPV-U?” Anyway… I digress…)

BACKGROUND: The university is trying to have it both ways, saying that tapping the braintrust of the faculty through this AI thing is the greatest thing on earth while also telling faculty this is just experimental and there’s no real concern here.

As with most things administrators SWEAR aren’t problems, the faculty members refuse to buy this bull-pucky:

As is the case for many AI chatbots still in their infancy, Atom gets things wrong. In the module it designed for Hanlon, it included clips from an old lecture he gave focused on the work and career of 20th-century literary theorist Cleanth Brooks. Throughout the course it called the critic “Client” Brooks.

<SNIP>

Ostling is worried that Atomic “will start being used widely, and I have content on my Canvas shelves that would be very inappropriate to show up without context in a course,” he said. “Not only do I think the students will be poorly served because they might learn things that aren’t true, but it could potentially get me in trouble.”

I’m feeling this as well, given that I often have students interview other students for classroom-only exercises that get posted to Canvas. So, for example, a student talking about their experience at the local Pub Crawl might not be all that thrilled if that info becomes part of a database of content for everyone to see.

Even more, I have to occasionally create “alternative timeline scenarios” for the students. For example, to have my students write an “announcement press release,” I make up the scenario that our current chancellor resigned a while back, the university did a search and today is announcing the hiring of the next chancellor. It’s a logical scenario that would be something students might be expected to do as PR practitioners (hiring news release) and it forces them to focus on what to include in a short space.

However, I obviously have made up the name of the person we hired as well as that person’s background and accomplishments. If AI slurps it up and treats it as gospel, that’s not going to be good for anyone involved.

This all led me to today’s throwback post about our system trying to steal faculty content for what I would assume could be a situation like this. Even if the Universities of Wisconsin folks double-pinky promise not to turn my work into AI slop, I still don’t want them co-opting my life’s work for all the reasons listed below.

I did a check on how this is going and the board of regents hasn’t passed this yet, but I’m always leery of summer months, as that’s a great time for universities to pass these “take out the trash” bills, because nobody’s looking.

The Universities of Wisconsin System is trying to steal faculty’s copyright rights to educational material. Please help fight this stupid power grab.

(The system says, “We would never look to diminish your rights or take your hard-earned work away from you.” What the system actually does is more accurately depicted in the scene above.)

THE SHORT, SHORT VERSION: The Universities of Wisconsin System is trying to rewrite its copyright policy and assign itself the rights to the educational work and scholarly materials faculty create. If this goes through, faculty who have spent years building and improving their courses could get the shaft and I have no idea if I’ll be able to share stuff that I’ve always shared with you.

If you think this is as stupid as I do, please email system President Jay Rothman at president@wisconsin.edu and tell him not to let this policy pass.

(UPDATE: Rothman is no longer the president, but that email address will still get you where you need to go.)

THE LONGER, MORE NUANCED VERSION: Here’s a deep dive on the way the system is trying to recreate its copyright policy in a way that disenfranchises its faculty:

THE LEAD: The Universities of Wisconsin has decided to rewrite its rules involving intellectual property, giving the system total ownership over pretty much everything faculty create:

The UW System is proposing a new copyright policy that professors say would eliminate faculty ownership of instructional materials. The revisions are stoking alarm among professors statewide who say such a move would cheapen higher education into a mass-produced commodity.

“This policy change is nothing less than a drastic redefinition of the employment contract, one that represents a massive seizing of our intellectual property on a grand scale,” professors from nine of the 13 UW campuses wrote in a recent letter to UW System President Jay Rothman. “It would allow any UW campuses to fire any employee and nonetheless continue teaching their courses in perpetuity with no obligation to continue paying the employee for their work.”

Aside from owning faculty syllabi, lecture notes and exam materials, UW would also have ownership rights over the scholarship faculty create:

A draft of the new policy, obtained by the Milwaukee Journal Sentinel, would eliminate existing copyright language and replace it with the assertion that UW System holds ownership of both “institutional work” and “scholarly work.”

<SNIP>

“Scholarly work” includes most of what professors produce, such as lecture notes, course materials, journal articles and books. The UW System transfers copyright ownership to the author, as is customary in higher education, but notes that it “reserves” the right to use the works for purposes “consistent with its educational mission and academic norms.”

DOCTOR OF PAPER HOT TAKE: Given that I’ve got about a dozen textbooks in the field, that I edit a journal that needs scholarly work to keep it running, that I spent seven years crafting hundreds of blog posts and that I’ve built a ton of courses over my nearly 30 years of teaching, this was basically my calm, measured reaction:

I’ve already sent a copy of the proposal to Sage for its team of lawyers to go over, so I’m hopeful that I receive an answer along the lines of, “Calm down… Have a Diet Coke… This isn’t going to destroy what you’ve spent decades creating…”

In the meantime, let’s lay out how stupid and problematic this is:

The quality of your courses depends on the people you’re pissing off: We essentially went through this in my media-writing class today and a collection of sophomores and juniors understood it, so I’m hoping it might make sense to the Board of Regents.

I proposed the following scenario to one kid in the class: Let’s say you turned in a really good story as an assignment for this class. In fact, I thought it was so good, I took your name off of it, put my name on it and submitted it to the local paper. The paper then paid me $50 for the story.

I then asked the kid, “So, given that every time you turn in something good, I’m going to take it, put my name on it and make money from it, how likely are you to put forth your best effort in this class?”

The kid said, “There’s no way I’m going to do anything good for you anymore.”

Right. So, let’s play that out here: If every time I work REALLY hard on making good stuff for my class, the U is just going to claim it as its own, why would I bother to do anything more than the bare minimum to make my class work?

I guess you could make the argument that pride in our work and a desire to make things better for our students could inspire us to do great things, even in the face of a naked power grab by the system, but if you’re going to treat us like mercenaries, we’re going to behave that way.

This will stifle innovation, limit interest in developing new courses and create a general sense of animosity among faculty. It will also likely inspire professors to find new ways to hide stuff from the administration folks, as one person on social media suggested to me:

This stuff isn’t a product, but rather a process: Inherent to the system’s argument is the basic premise of work product: You built this stuff while you were employed by us and required to do so. Therefore, since we paid you for this, the stuff is ours.

That works in the private sector, where we’re tasked with specific outcomes and granted special provisions to create this kind of work product. For example, I know that when I worked at the Wisconsin State Journal, I wrote a lot of articles that the paper published. Implicit in my employment agreement was the premise that I was acting on behalf of the paper, writing things that the paper tasked me to write and publishing those things in a copyrighted publication. They own that stuff and I’m cool with that. I don’t think I’m ever going to want to republish a weather story I wrote in 1996, and if I did something cool I wanted to show my students, that’s acceptable use.

However, when it comes to my media-writing class, I didn’t get hired to write lecture notes and syllabi for that class. In fact, what I wrote was a tweaked version of something I’d been working on for decades. I’d drafted some of this conceptual stuff when I was working at UW-Madison, improved upon it when I was at Mizzou, reconfigured it at Ball State and then adapted it here. This isn’t like you hired me to bake a cake for your birthday. This is a tree I’ve been growing and tending for years and years.

The material might not be UW’s to steal: Even if you don’t buy the argument above, the instructors might not own the material they’re using in the first place.

Textbook publishers aren’t just sending out desk copies of dead-tree books and telling fledgling professors, “Vaya con Dios.” They actually build a ton of back-end stuff into the educational packages they provide these days, which includes a lot of the stuff the system is trying to get its grubby little paws on.

I know for my books at Sage, we have sample syllabi, PowerPoint slides for lectures, notes for instructors, exercises and test banks crammed with questions. I might even be forgetting some of the stuff we provide.

(Shameless Plug: Sage really is amazing when it comes to this kind of stuff. If you ever need a book, check these folks out first, especially if you need some help with the shaping and molding of the entire class experience.)

These things are available to instructors because Sage built them to go along with the authors’ textbooks. The professors can use them as they are, add stuff, cut stuff or otherwise tweak what they receive. That said, it’s not theirs to sell or give away. Sage holds the copyright for this stuff and I imagine Sage and the other book publishers who pour a ton of time and resources into building these things would be more than a bit peeved if the UW System tried to claim it as its own.

The Coy and Vance Duke Theory of Education: When I was a kid, I loved “The Dukes of Hazzard” television show, which ran every Friday for about seven or eight years. The show involved two cousins, Bo and Luke Duke, getting into scrapes with the corrupt law enforcement of Hazzard County and doing amazing car chases in their 1969 Dodge Charger. Along with patriarch Uncle Jesse Duke and the lovely cousin Daisy Duke, the boys were “makin’ their way, the only way they know how,” to quote the theme song.

It was a simple show that drew a good audience and it seemed to work well. However, around the fifth season, John Schneider and Tom Wopat (who played Bo and Luke, respectively) got into a contract dispute with the studio over salaries. Rather than pay them and move on with life, the studio got the idea in its head that the car (the General Lee) was actually the star of the show, so it didn’t matter who was driving it and the studio didn’t need these two pretty boys at all.

Enter new cousins: Coy and Vance Duke.

If ever there was a knock-off of a brand name, this was it. Like the original Duke Boys, one was blonde, one was brunette. They essentially wore the same wardrobe, had the same catch phrases and did the same insane driving stuff. That said, the ratings took a dump and after one season, Bo and Luke “returned from driving the NASCAR circuit” and Coy and Vance ended up fading from memory.

What the universities are doing here is essentially the same kind of thing. They figure, “Well, hell, if we have the notes, the syllabus and the PowerPoint slides, we don’t really need the professor who created them at the front of the room.” These folks assume that once we decide to leave, retire or whatever, they can just plug in an adjunct at a fraction of the cost and things will run like a Swiss watch. And that’s not just me being paranoid, as other folks see it as well:

I pretty much know my notes aren’t going to be helpful to other people as I wrote them based on a lot of my experiences in the field. Notes like (BUS FIRE STORY GOES HERE) or (EXPLAIN DRUG DEALER SHOT THING) probably won’t work for a random Coy or Vance they bring in to teach my class after they decide they don’t need me anymore.

HERE’S WHY YOU SHOULD CARE: One of the biggest reasons I’m worried about this is that it impacts what I can do with my materials. That’s also the main reason why I think you should care about it, too.

I never took this job to get rich and I certainly don’t like the idea of coming across like Daffy Duck when he found the treasure room:

However, when I know stuff is mine to do with as I please, that tends to benefit a lot of other people as well. Whenever someone shoots me an email and says, “Hey, how do you organize your class?” I’m always happy to give them a copy of my syllabus. When someone needs an assignment I’ve built, I’m glad to share it with them or on the blog.

When we went into COVID lockdown, I basically dumped everything I ever did that I thought would help people into the Corona Hotline section of the blog for free. All those goodies remain there to this day, so feel free to help yourself.

If this policy passes, I might not be as free to offer that kind of generosity anymore, and that would really tick me off.

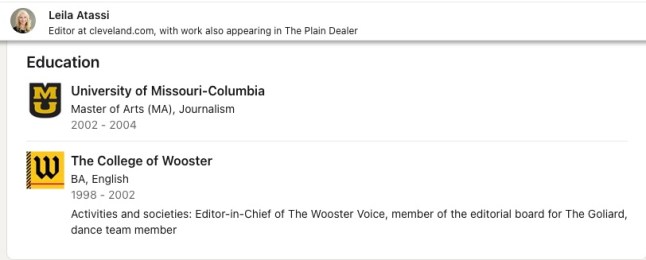

